|

I am a Ph.D. student in EECS at MIT CSAIL, advised by Professor Pulkit Agrawal. I received my Bachelor's degree (Summa Cum Laude) in Mechanical Engineering from Seoul National University. Previously, I was a full-time research scientist at NAVER LABS, developing machine learning and reinforcement learning algorithms that enable robot arms like AMBIDEX to perform various daily tasks. I also spent some time at Saige Research as an undergraduate research intern.

Email / CV / Google Scholar / Twitter / Blog |

|

|

[2026 Jun 06] 🎤 Gave talks and won Best Paper Awards at multiple ICRA 2026 workshops for our Tune to Learn paper.

[2026 Apr 27] 🎉 Tune to Learn was accepted to RSS 2026!

[2026 Apr 14] 📄 New preprint! Tune to Learn is out on arXiv: we show how controller gains shape the inductive bias of policy learning. See the Twitter thread for a quick overview.

[2025 Nov 07] 🎙️ Gave a seminar at Prof. Sunghoon Ahn's lab (IDIM) at Seoul National University.

[2025 Oct 02] 🤖 Hosted the Dexterous Humanoid Manipulation Workshop at Humanoids 2025 in Seoul.

[2025 May 20] ✈️ Traveling to ICRA 2025 in Atlanta to give an oral talk on DART.

[2024 Aug 07] 🎙️ Gave a seminar at Kimin Lee's lab at KAIST.

[2024 Jul 24] 🎤 Gave an oral talk at ICML 2024 in Vienna on Automatic Environment Shaping is the Next Frontier in RL (Top 5%).

[2023 Aug] 🎓 Left NAVER LABS and started a new journey as a Ph.D. student at MIT CSAIL, working with Professor Pulkit Agrawal. |

|

I want a world where robots can perform every dexterous manipulation task a five-year-old can do with their two hands, and where building such a system is genuinely easy, not a multi-year research effort. Some argue we can get there by following the same playbook that drove rapid progress in vision and language. I'm not so sure.

I believe robot learning is fundamentally different from language or vision. A robot is an inherently interdisciplinary, tightly coupled system of hardware, controllers, sensor configurations, data collection interfaces, and learning algorithms, and changing any one of them rewires how the others behave. This makes robotics much closer to biology than to modern ML: the real scientific question isn't just which model is best, but how these many interacting pieces shape what a robot can ultimately learn. And we're still in the early days of building that kind of science.

My research aims to build this missing science. I develop open-source infrastructure that the community uses to collect and process robot data at scale, and I use that infrastructure to run controlled empirical studies that reveal how under-examined design choices, from low-level controller gains to data collection interfaces, fundamentally determine learning outcomes. The goal is a future where training a robot to perform a new task is less art and prayer, more engineering: principled, scalable, and reproducible. |

|

|

|

Younghyo Park, Haoshu Fang, Pulkit Agrawal course website (6.S186) / lecture videos

This course provides a practical introduction to training robots using data-driven methods. Key topics include data collection methods for robotics, policy training methods, and the use of simulated environments for robot learning. Throughout the course, students gain hands-on experience collecting robot data, training policies, and evaluating their performance. |

|

|

|

|

I'm passionate about building open-source tools that empower the robotics community and streamline the robot development experience. |

|

Younghyo Park, Pulkit Agrawal github / App Store / twitter / short paper

A complete ecosystem for using Apple Vision Pro in robotics research — stream hand/head tracking from Vision Pro, send video/audio/simulation back for real-world teleoperation, simulation teleoperation, and egocentric dataset recording. |

|

github / docs

An asyncio-based Python library for controlling Franka Emika robots. Provides a high-level, asynchronous interface combining pylibfranka for 1 kHz torque control, MuJoCo for kinematics/dynamics, and Ruckig for smooth trajectory generation. |

|

Younghyo Park github

Generate 3D-printable cubes with ArUco or AprilTag fiducial markers on all six faces, then detect their full 6-DoF pose from a single camera image. A two-part pipeline: a generator that produces multi-color 3MF files ready for dual-color 3D printing, and a detector that estimates rotation and translation given camera intrinsics. |

|

* denotes equal contribution. |

|

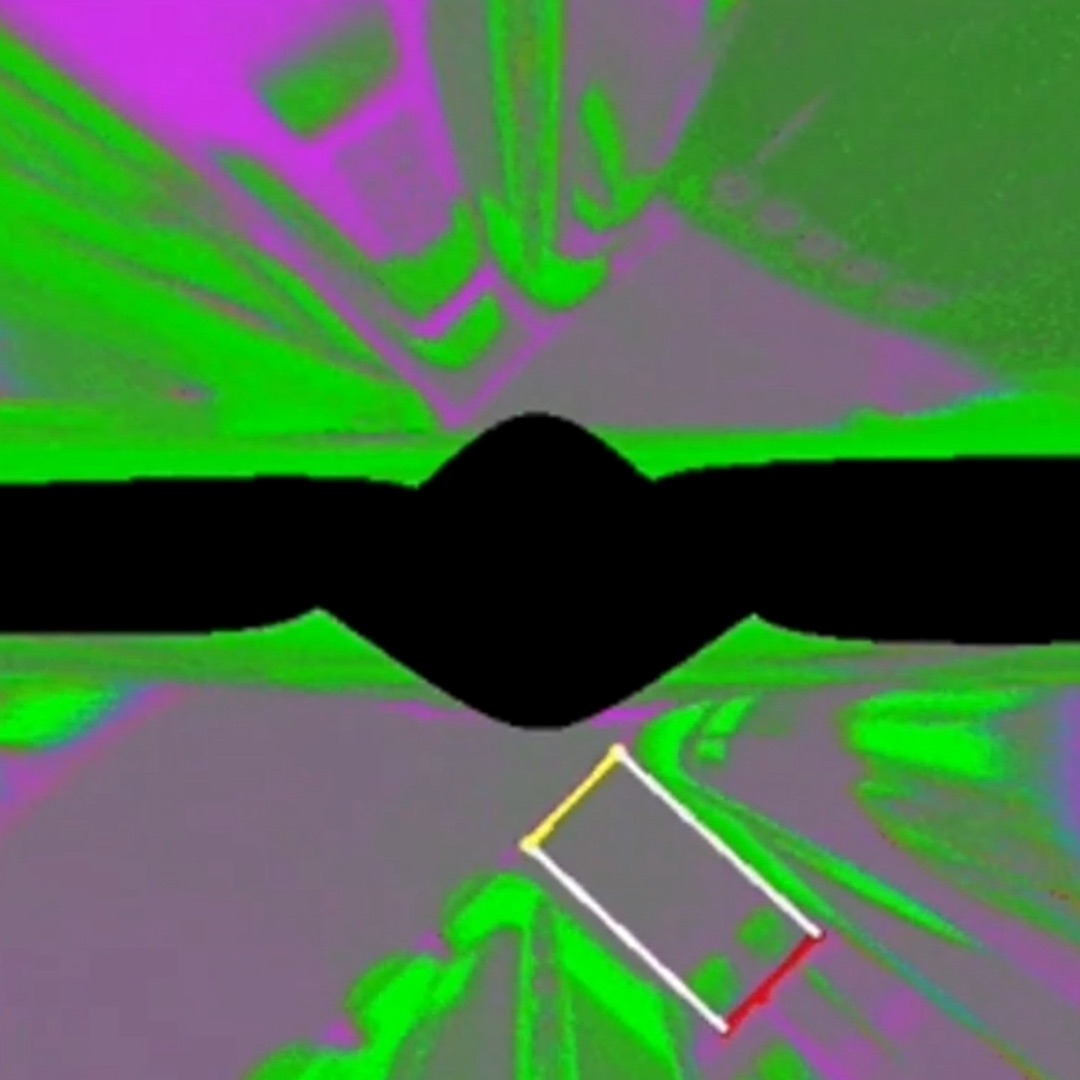

Antonia Bronars*, Younghyo Park*, Pulkit Agrawal RSS, 2026; ICRA 2026 Workshops (Best Paper Awards: Beyond Teleop Workshop and Manipulation Robustness Workshop; Selected Oral Presentation: CR2 Contact-Rich Robotics Workshop) project page / paper / twitter

We show that controller gains shape the inductive bias of different policy learning paradigms, and identify gain regimes that maximize learnability for behavior cloning, reinforcement learning, and sim-to-real transfer. * equal contribution, order determined by coin flip |

|

|

Younghyo Park, Jagdeep Bhatia, Lars Ankile, Pulkit Agrawal ICRA, 2025 project page / twitter

DART is a teleoperation platform that leverages cloud-based simulation and augmented reality (AR) to revolutionize robotic data collection. It enables higher data collection throughput with reduced physical fatigue and facilitates robust policy transfer to real-world scenarios. All datasets are stored in the DexHub cloud database, providing an ever-growing resource for robot learning. |

|

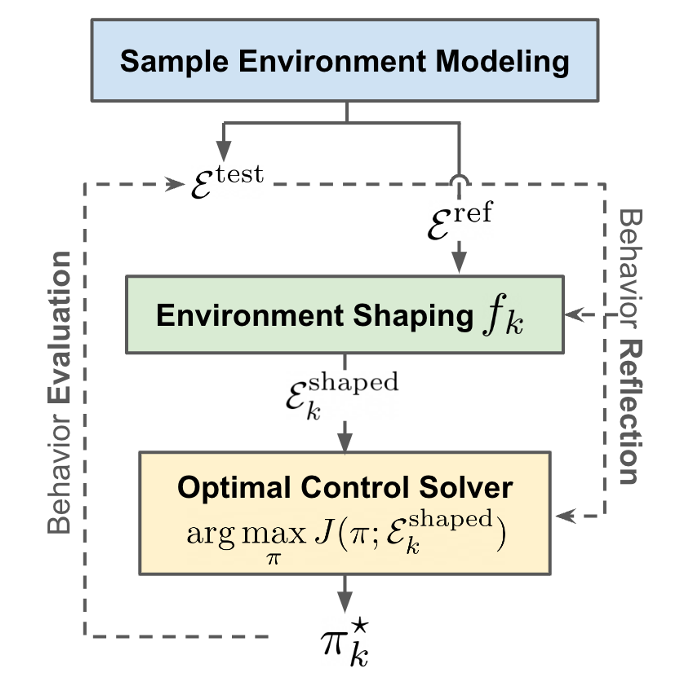

Younghyo Park*, Gabriel Margolis*, Pulkit Agrawal ICML, 2024 (Oral Presentation, Top 5%) project page / video / twitter

Most robotics practitioners spend more time shaping environments (e.g. rewards, observation/action spaces, low-level controllers, simulation dynamics) than tuning RL algorithms to obtain a desirable controller. We posit that the community should focus more on (a) automating environment shaping procedures and/or (b) developing stronger RL algorithms that can tackle unshaped environments. |

|

Sunin Kim*, Jaewoon Kwon*, Taeyoon Lee*, Younghyo Park*, Julien Perez ICRA, 2023 project page / video / twitter

An algorithm that discovers, from scratch, a diverse and useful set of skills that are inherently safe to compose for unseen downstream tasks. Considering safety during the skill discovery phase is a must when solving safety-critical downstream tasks. |

|

Younghyo Park*, Seunghoon Jeon*, Taeyoon Lee IROS, 2022 (Winner: Best Entertainment & Amusement Paper Award) project page / video / story / interview / paper

The drawing robot ARTO-1 performs complex drawings in the real world by learning low-level stroke drawing skills, which require delicate force control, from human demonstrations. This approach eases the planning required to actually perform an artistic drawing. |

|

Kyumin Park, Younghyo Park, Sangwoong Yoon, Frank C. Park Transactions on Mechatronics, 2021 paper

We detect collisions for robot manipulators using unsupervised anomaly detection methods. Compared to supervised approaches, this approach does not require collision datasets and can even detect unseen collision types. |

|

Younghyo Park, Joonwoo Ahn, Jaeheung Park ICRITA, 2022 paper

Performs real-time parking slot detection and tracking for autonomous parking systems. |

|

Check out Jon Barron's repository for the template of this website. |