Tune to Learn: How Controller Gains Affect Robot Policy Learning

* Equal contribution; order determined by coin flip.

Position controllers have become the dominant interface for executing learned manipulation policies. Yet a critical design decision remains understudied: how should we choose controller gains for policy learning? We argue that gain selection should be guided by learnability: how amenable different gain settings are to the learning algorithm in use.

These findings reveal that optimal gain selection depends not on the desired task behavior, but on the learning paradigm employed.

Behavior Cloning. BC benefits from compliant, overdamped gain regimes. Swapping to the right gain setting can improve success rates by over 30% on the same task with the same data.

Reinforcement Learning. RL can succeed across all gain regimes given compatible hyperparameter tuning. The learning algorithm adapts to the dynamics imposed by different gains.

Sim-to-Real Transfer. Sim-to-real transfer is harmed by stiff, overdamped configurations. Compliant gains reduce the sim-to-real gap and improve transfer success.
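To make the gain regimes named above concrete, here is a minimal sketch of a 1-DoF PD position controller. It is an illustration under stated assumptions, not the setup used in the study: the point mass, the integration scheme, the gain values, and the names `damping_ratio`, `simulate_pd`, and `regimes` are all hypothetical. The proportional gain `kp` sets stiffness (low `kp` is compliant), and the damping ratio `kd / (2 * sqrt(kp * mass))` separates overdamped (above 1) from underdamped (below 1) behavior.

```python
import math


def damping_ratio(kp: float, kd: float, mass: float = 1.0) -> float:
    """Damping ratio of mass * x'' = kp * (target - x) - kd * x'."""
    return kd / (2.0 * math.sqrt(kp * mass))


def simulate_pd(kp, kd, target=1.0, mass=1.0, dt=1e-3, steps=2000):
    """Roll out a point mass under a PD position controller (semi-implicit Euler)."""
    x, v = 0.0, 0.0
    trajectory = []
    for _ in range(steps):
        force = kp * (target - x) - kd * v  # PD law: stiffness term plus damping term
        v += (force / mass) * dt
        x += v * dt
        trajectory.append(x)
    return trajectory


# Hypothetical (kp, kd) pairs chosen only to illustrate the regimes.
regimes = {
    "compliant_overdamped": (25.0, 20.0),
    "stiff_overdamped": (400.0, 80.0),
    "stiff_underdamped": (400.0, 8.0),
}

for name, (kp, kd) in regimes.items():
    traj = simulate_pd(kp, kd)
    print(
        f"{name}: zeta={damping_ratio(kp, kd):.2f}, "
        f"peak={max(traj):.3f}, position at 0.2 s={traj[199]:.3f}"
    )
```

In this toy system, the overdamped settings approach the target without overshoot, the underdamped setting overshoots, and the stiff settings close the tracking error faster than the compliant one.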

We have presented a systematic study of how position controller gains shape learning dynamics across three paradigms of modern robot learning. Our findings reveal that gains function not as behavioral parameters, but as an inductive bias that modulates the learning interface between policy and environment.

Whole-Body Tracking Controllers for Humanoids. Modern humanoid robots increasingly use RL-trained whole-body tracking policies as low-level controllers, analogous to the PD controllers studied here. These motion tracking policies tend to be inherently stiff — yet when such policies serve as the low-level interface for higher-level learning, their effective compliance directly shapes the learning dynamics of the policies above them, much as PD gains do in our setting.

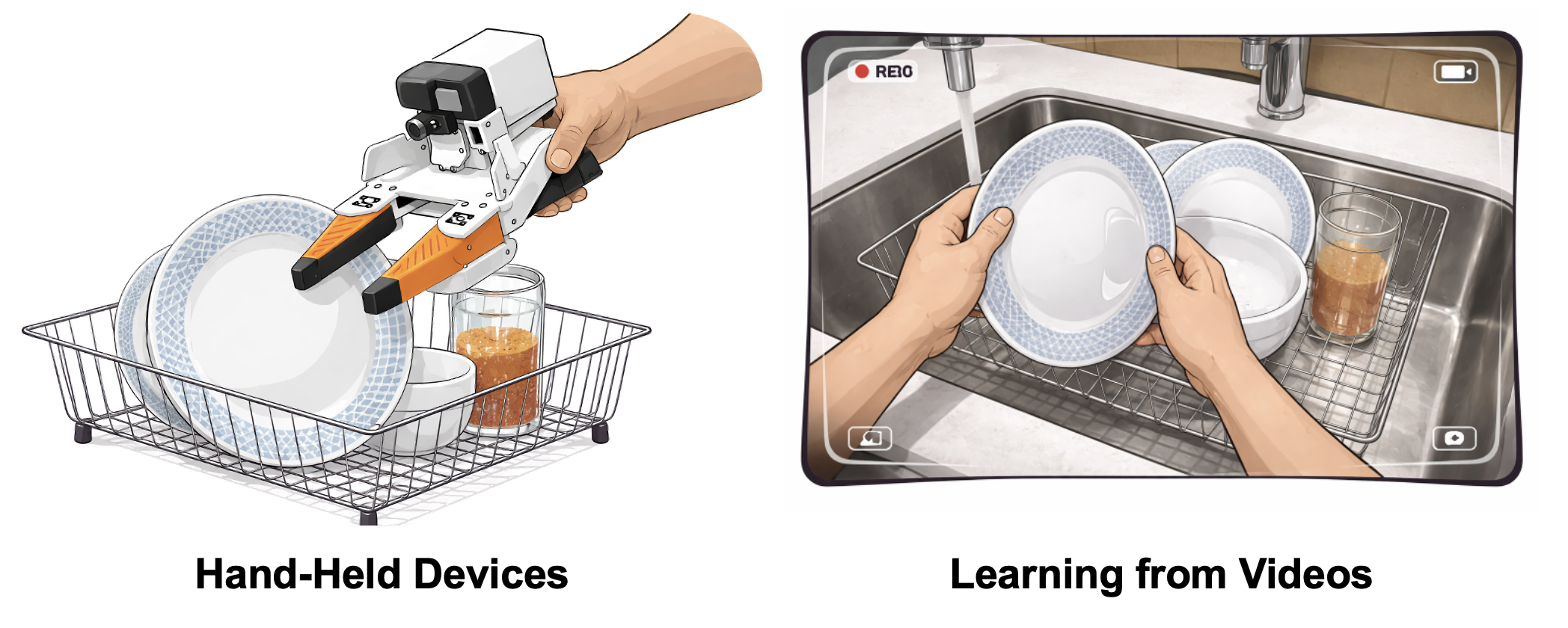

The Implicit Stiff-Controller Assumption in Learning from Wearables or Videos. Paradigms that learn manipulation skills from human videos or wearable devices typically treat observed next-timestep state as the action label, implicitly assuming perfect target tracking — which our results suggest may be suboptimal for imitation learning. Whether these gain-dependent trends generalize to such cross-embodiment or whole-body control settings remains an open question.
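The implicit assumption described above can be illustrated with a toy rollout. The sketch below is not taken from the study: the 1-DoF point mass, the sinusoidal command sequence, the gain values, and the names `rollout` and `label_gap` are all assumptions made for illustration. It measures how far the observed next state sits from the command that was actually sent, which is the error introduced when the next state is used as the action label.

```python
import math


def rollout(kp, kd, commands, mass=1.0, dt=1e-3, substeps=50):
    """Track position commands with a PD controller; return the state after each control step."""
    x, v = 0.0, 0.0
    observed = []
    for target in commands:
        for _ in range(substeps):  # command is held constant within a control step
            force = kp * (target - x) - kd * v
            v += (force / mass) * dt
            x += v * dt
        observed.append(x)
    return observed


# Hypothetical command stream: a 0.5 Hz sine sampled at a 20 Hz control rate.
commands = [0.5 * math.sin(2.0 * math.pi * 0.5 * k * 0.05) for k in range(1, 81)]


def label_gap(kp, kd):
    """Mean absolute gap between the sent command and the observed next state."""
    observed = rollout(kp, kd, commands)
    return sum(abs(c - o) for c, o in zip(commands, observed)) / len(commands)


stiff_gap = label_gap(kp=2500.0, kd=100.0)    # stiff, critically damped
compliant_gap = label_gap(kp=25.0, kd=20.0)   # compliant, overdamped

print(f"stiff gains: mean |command - next state| = {stiff_gap:.3f}")
print(f"compliant gains: mean |command - next state| = {compliant_gap:.3f}")
```

In this toy setting, the next state is a close proxy for the command only under stiff gains; under compliant gains the two diverge, so next-state labels implicitly describe a stiff controller.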

@misc{bronars2026tunelearncontrollergains,
  title={Tune to Learn: How Controller Gains Shape Robot Policy Learning},
  author={Antonia Bronars and Younghyo Park and Pulkit Agrawal},
  year={2026},
  eprint={2604.02523},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2604.02523},
}